Creating real-time predictions

Use your ML deployment to predict future outcomes on new data. You create real-time predictions using the real-time prediction endpoint in the Machine Learning API.

Predictions can be made in real time, for example to decide on customer discounts at checkout. After predictions are generated, you can load the predictive insights into a Qlik Sense application. This lets you visualize and interact with the data and create what-if scenarios.

The real-time predictions API is deprecated and replaced by the real-time prediction endpoint in the Machine Learning API. The functionality itself is not being deprecated. For future real-time predictions, use the real-time prediction endpoint in the Machine Learning API. For help with migrating from the real-time predictions API to the Machine Learning API, refer to the migration guide on the Qlik Cloud developer portal.

Creating real-time predictions with the API

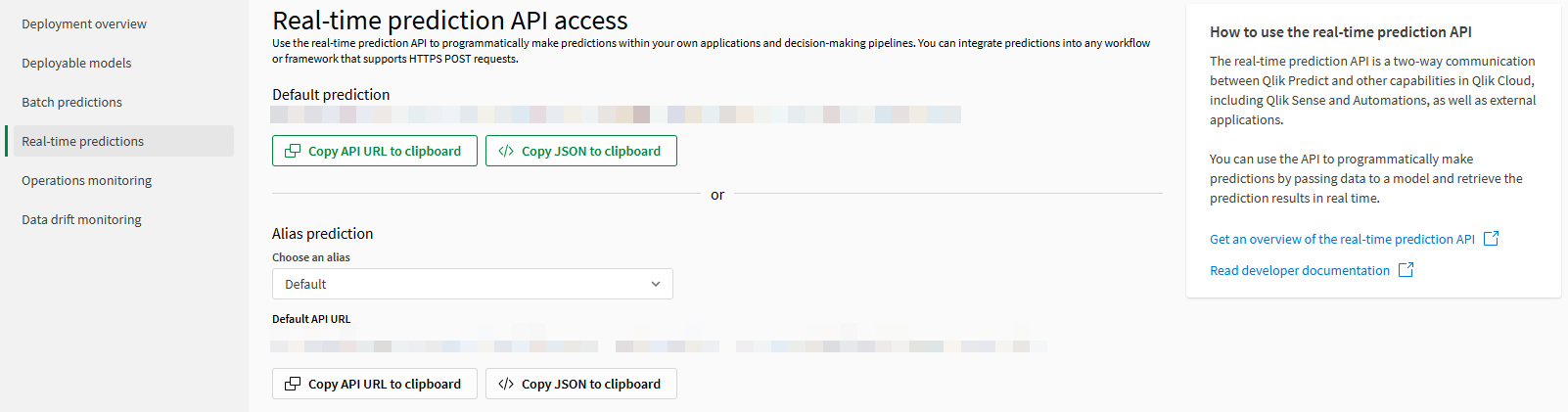

The Real-time predictions pane in the ML deployment interface gives you access to the real-time prediction endpoint in the Machine Learning API. This pane is visible if the default model in the deployment is activated and you have the required permissions for real-time predictions.

The real-time prediction endpoint enables two-way communication between Qlik Predict and other capabilities in Qlik Cloud, including Qlik Sense and Automations, as well as external applications. You can use the endpoint to programmatically make predictions by passing data to a model and retrieving the prediction results in real time.

Real-time predictions pane

Do the following:

- Open the Real-time predictions pane in an ML deployment.
- Use the copy buttons to copy the applicable URL or JSON to your clipboard (for information about selecting which alias to use, see Working with model aliases in real-time predictions).
- Incorporate calls to the Machine Learning API into your own applications, or manually call the API using your desired tool.

For real-time endpoint specifications for the Machine Learning API, see Generate predictions in a synchronous request/response.

For more general information about the Machine Learning API, see Machine Learning API.
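As a rough illustration of the last step, the following Python sketch builds and sends a POST request from values copied out of the Real-time predictions pane. Everything specific here is an assumption: `PREDICTION_URL` and `PAYLOAD` are placeholders for the URL and JSON you copy from the pane, the function names are hypothetical, and the sketch assumes the API key is sent as a bearer token and that the response body is JSON. Check the endpoint specification for the exact request and response shapes.

```python
import json
import urllib.request

# Placeholders: paste the URL and JSON copied from the Real-time predictions pane.
PREDICTION_URL = "https://your-tenant.example.com/paste-the-copied-url-here"
PAYLOAD = {"paste": "the copied JSON here"}


def build_prediction_request(url, body, api_key):
    """Build (but do not send) a POST request carrying the JSON body."""
    data = json.dumps(body).encode("utf-8")
    return urllib.request.Request(
        url,
        data=data,
        method="POST",
        headers={
            # Assumption: the API key is passed as a bearer token.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )


def run_prediction(url, body, api_key, timeout=30):
    """Send the request and return the response, assuming it is JSON."""
    request = build_prediction_request(url, body, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

With a real URL, payload, and API key in place, a call such as `run_prediction(PREDICTION_URL, PAYLOAD, api_key)` would return the parsed response. Keeping request construction separate from sending makes it easy to inspect the headers and body before any network call is made.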

Requirements for real-time predictions

- An API key is required to use the real-time prediction endpoint. Users must have the Manage API keys permission in the tenant to generate an API key. See Generating and managing API keys.
- The source model for the ML deployment you are using must be activated for making predictions. For more information, see:
- You need the correct permissions for working with ML deployments and predictions. For more information, see Requirements and permissions.

Working with model aliases in real-time predictions

You can add multiple models to an ML deployment. A system of aliases is used in ML deployments to allow for dynamic swapping of models for use in predictions. For more information, see Using multiple models in your ML deployment.

When you copy your URL or JSON, the following options are available:

- Default prediction: Use this option to generate predictions from the default alias in the ML deployment.
- Alias prediction: Use this option when you want to generate predictions from any additional aliases you have added to the ML deployment. Select an alias using the drop-down menu, and then copy the URL or JSON.
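If your application calls more than one alias, one way to keep the choice explicit is to store the URL copied for each option and look it up by name. This is only a sketch: the URLs are placeholders, the alias name `challenger` and the helper `prediction_url` are hypothetical, and nothing here assumes how an alias is encoded in the real URL.

```python
# Placeholder URLs: replace each with the value copied from the pane.
DEFAULT_URL = "https://your-tenant.example.com/copied-default-prediction-url"
ALIAS_URLS = {
    # "challenger" is a hypothetical alias name.
    "challenger": "https://your-tenant.example.com/copied-alias-prediction-url",
}


def prediction_url(alias=None):
    """Return the copied URL for an alias, or the default URL when alias is None."""
    if alias is None:
        return DEFAULT_URL
    try:
        return ALIAS_URLS[alias]
    except KeyError:
        # Fail loudly rather than silently falling back to the default model.
        raise ValueError(f"No URL has been copied for alias {alias!r}") from None
```

Raising an error for an unknown alias, instead of falling back to the default URL, avoids silently generating predictions from a different model than the caller intended.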

Viewing data drift and prediction event details

After you run a real-time prediction, open the ML deployment and explore the Operations monitoring and Data drift monitoring panes. In these views, you can evaluate:

- The level of data drift for each feature involved in the prediction. The comparison is performed between the data you send to the Qlik Predict real-time prediction endpoint and the training dataset.
- Details about the prediction event, such as whether it succeeded or failed, and how many predictions it generated.

For more information, see: