Indexing phone reviews with embeddings and a vector database

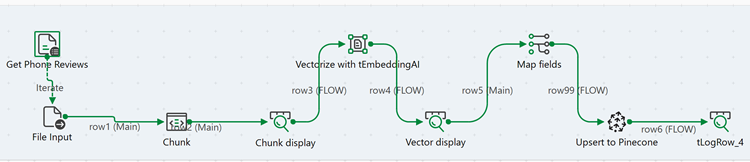

This Job reads phone review text files from a folder, splits the content into smaller chunks, generates a vector embedding for each chunk using Azure OpenAI, and stores the embeddings in a Pinecone vector database to enable semantic search.
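The read-and-chunk stage of this flow can be sketched outside the Studio as follows. This is a minimal illustration, not the Job's actual implementation: the function names `chunk_text` and `chunk_folder`, the character-based splitting strategy, and the `chunk_size` and `overlap` defaults are all assumptions chosen for the example.

```python
from pathlib import Path


def chunk_text(text: str, chunk_size: int = 500, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap slightly.

    The sizes are illustrative assumptions, not the settings used by the Job.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        piece = text[start:start + chunk_size].strip()
        if piece:
            chunks.append(piece)
        # Stop once the current window has reached the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks


def chunk_folder(folder: str, **kwargs) -> list[dict]:
    """Read every .txt file in a folder and return chunks with source metadata."""
    records = []
    for path in sorted(Path(folder).glob("*.txt")):
        text = path.read_text(encoding="utf-8")
        for i, chunk in enumerate(chunk_text(text, **kwargs)):
            # The id format "<file stem>-<chunk number>" is a hypothetical convention.
            records.append({"id": f"{path.stem}-{i}", "source": path.name, "text": chunk})
    return records
```

Keeping the source file name next to each chunk means a later search hit can be traced back to the review it came from. The small overlap reduces the chance that a sentence split across two chunks loses its context.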

Before you begin

Before running this Job, ensure you have:

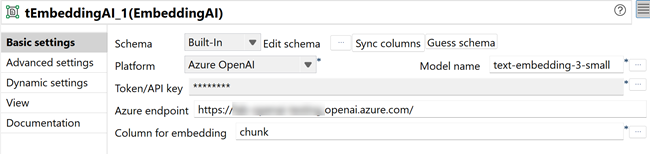

- An active Azure OpenAI account with access to the text-embedding-3-small model.

- Your Azure OpenAI API key and endpoint configured.

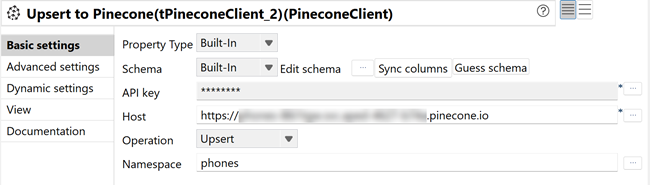

- A Pinecone account with an index created for storing embeddings.

- Your Pinecone API key and host endpoint configured.

- Downloaded the archive file tembeddingai-tpineconeclient_phone-review-files.zip and extracted the LG.txt and Iphones.txt files.

- Created the directory <folder_path>/phone-reviews/ and placed the extracted phone review text files in it.
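If you want to confirm these prerequisites from a script before opening the Job, a small check along the following lines may help. It is only a sketch: the environment variable names are hypothetical placeholders, because this scenario does not specify how the keys and endpoints are stored, and the helper name `missing_prerequisites` is invented for the example.

```python
import os
from pathlib import Path

# File names taken from the prerequisites list above.
REQUIRED_FILES = ("LG.txt", "Iphones.txt")

# Hypothetical variable names; adapt them to wherever you keep your credentials.
REQUIRED_SETTINGS = (
    "AZURE_OPENAI_API_KEY",
    "AZURE_OPENAI_ENDPOINT",
    "PINECONE_API_KEY",
    "PINECONE_HOST",
)


def missing_prerequisites(folder, env=None):
    """Return a list of human-readable problems; an empty list means all checks passed."""
    env = os.environ if env is None else env
    problems = []
    for name in REQUIRED_FILES:
        if not (Path(folder) / name).is_file():
            problems.append(f"missing file: {name}")
    for name in REQUIRED_SETTINGS:
        if not env.get(name):
            problems.append(f"missing setting: {name}")
    return problems
```

Call `missing_prerequisites("<folder_path>/phone-reviews")` with your own folder path and review any problems it lists before running the Job.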

Linking the components

Procedure

Configuring the components

About this task

Procedure

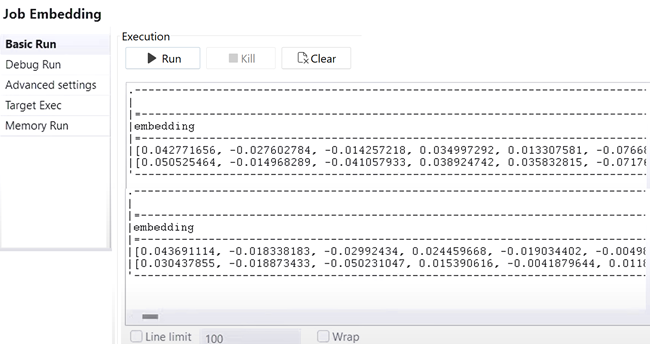

Executing the Job

Procedure

- Press Ctrl+S to save the Job.

- Press F6 to execute the Job.

Results

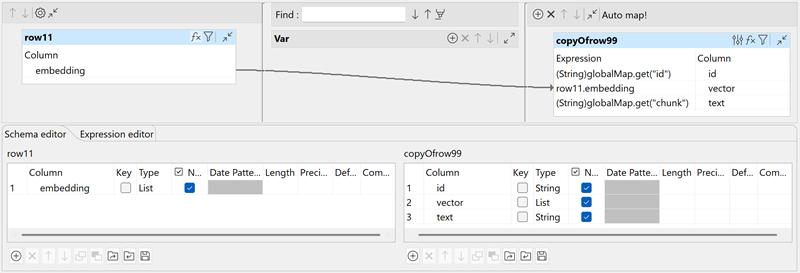

The Job reads the phone review files, chunks the text, generates embeddings using Azure OpenAI, verifies metadata transfer through tMap, and upserts the vectorized data into Pinecone for semantic search.
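To make the upsert step more concrete, the sketch below shows one way chunk text, metadata, and embedding vectors could be combined into records shaped like Pinecone's upsert format (`id`, `values`, `metadata`). It makes no network calls. The helper names `to_records` and `batched`, the metadata field names, and the batch size of 100 are assumptions for illustration, not details taken from the Job.

```python
def to_records(chunks, embeddings):
    """Pair each chunk with its embedding vector in an upsert-style record.

    chunks: list of dicts with "id", "source", and "text" keys (assumed layout).
    embeddings: list of numeric vectors, one per chunk, in the same order.
    """
    if len(chunks) != len(embeddings):
        raise ValueError("each chunk needs exactly one embedding")
    records = []
    for chunk, vector in zip(chunks, embeddings):
        records.append({
            "id": chunk["id"],
            "values": list(vector),
            # Metadata travels with the vector so search hits stay traceable.
            "metadata": {"source": chunk["source"], "text": chunk["text"]},
        })
    return records


def batched(items, size=100):
    """Yield consecutive slices of at most `size` items (batch size is an assumption)."""
    for start in range(0, len(items), size):
        yield items[start:start + size]
```

Sending records in batches rather than one request per vector is a common way to keep request sizes manageable. The appropriate batch size depends on the vector dimension and the amount of metadata.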

The phone review embeddings stored in Pinecone enable semantic search queries, allowing users to find relevant reviews based on meaning and context rather than exact keyword matches. Because each chunk is embedded separately, a query can match the specific passage of a review rather than the whole file, which makes search results more precise.
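The idea behind semantic search can be illustrated with a small similarity ranking. The sketch below assumes cosine similarity as the metric (the metric actually used depends on how the Pinecone index was created) and uses toy three-dimensional vectors in place of real embeddings. The function names and record ids are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vector, records, k=3):
    """Return the k (score, id) pairs most similar to the query, best first."""
    scored = [(cosine_similarity(query_vector, r["values"]), r["id"]) for r in records]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Toy vectors standing in for real embeddings; the values are made up.
toy_records = [
    {"id": "LG-0", "values": [0.9, 0.1, 0.0]},
    {"id": "Iphones-0", "values": [0.1, 0.9, 0.0]},
    {"id": "Iphones-1", "values": [0.0, 0.2, 0.9]},
]
results = top_k([1.0, 0.0, 0.0], toy_records, k=2)
```

In a real query, the query text would first be embedded with the same model that produced the stored vectors, so that both sides live in the same vector space. A vector database performs this kind of ranking at scale with approximate nearest-neighbor indexes instead of scanning every record.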