Qlik Answers® - Security Architecture and Controls

Introduction

This topic explains how Qlik Cloud handles data when AI capabilities such as Qlik Answers, semantic search, and natural language insights are used. It is intended to help security and compliance teams understand the technical controls that apply when inference requests are routed across AWS regions, a practice known as cross-region inference.

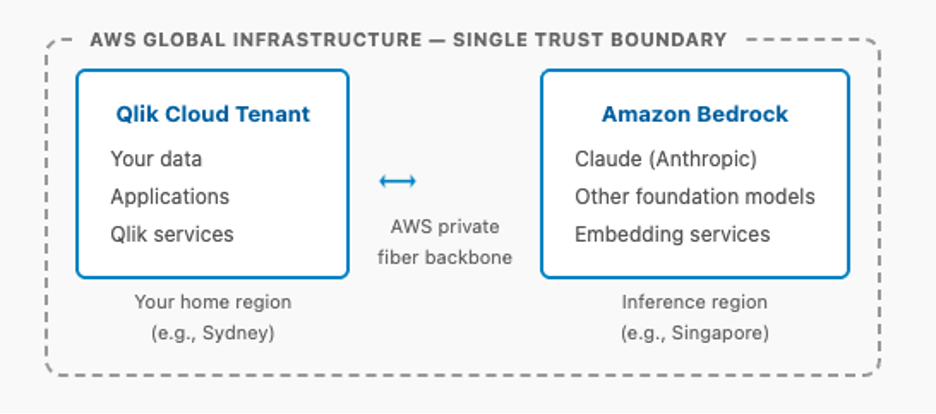

Qlik Cloud runs on AWS, and AI inference is provided by Amazon Bedrock, which also runs on AWS. All processing occurs within a single AWS security boundary. No third-party cloud providers or public internet routes are involved.

Architecture Overview

Qlik Cloud is a native AWS SaaS platform. Its AI capabilities are powered by Amazon Bedrock, AWS's fully managed service for accessing foundation models, including Claude from Anthropic. Because both Qlik Cloud and Bedrock operate on the same AWS infrastructure, this is an AWS-to-AWS architecture rather than a multi-cloud integration. The remote region used in cross-region inference is referred to as the inference region throughout this document.

Your Qlik Cloud tenant resides within the AWS network at all times. When AI inference occurs in a different region, data moves between two components of the same AWS infrastructure, connected by AWS's private fiber backbone, rather than being sent to an external vendor over the internet.

This architecture means you have a single trust boundary, a single Data Processing Agreement (DPA), and a unified compliance framework, rather than the multiple agreements and reconciled policies required in traditional multi-cloud AI deployments.

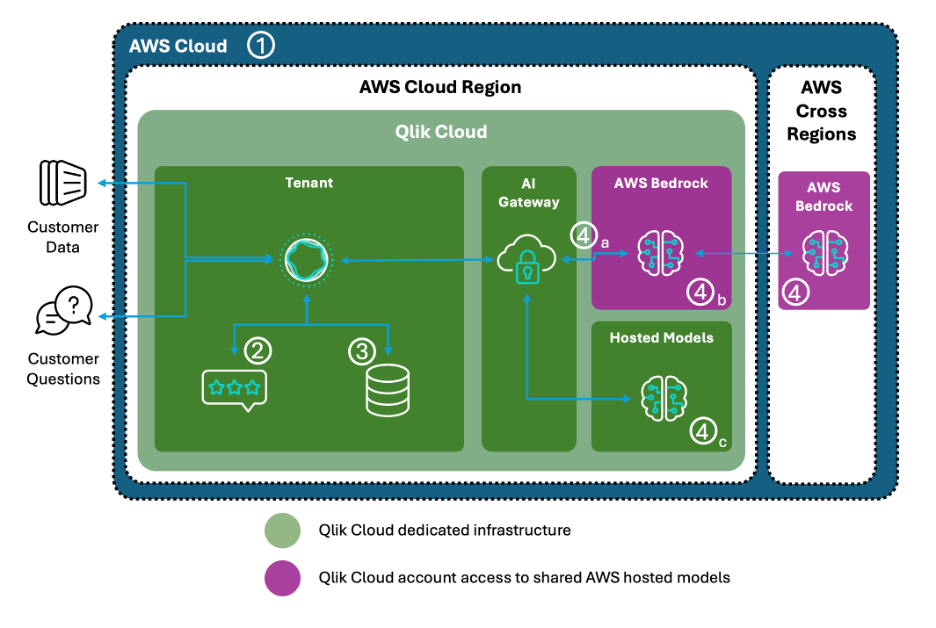

In more detail:

- All traffic stays within the AWS private network.

- Questions and responses are logged within the customer tenant using tenant encryption.

- Indexed customer data for applications and knowledge bases is encrypted at rest in the index with tenant encryption.

- Requests to generate AI representations are sent to the LLM through cross-region inference:

  - Data is encrypted in transit over the secure AWS network.

  - No data is retained by AWS or AI model providers, and no customer data is used to train LLMs.

- Some AI models are hosted within the Qlik Cloud VPC.

How Cross-Region Inference Works

The following describes what happens when a user action initiates an AI request and the inference request is routed from a home region (for example, Australia) to an inference region (for example, Singapore). While the example below relates to Qlik Answers, the process is the same for all AI services using cross-region inference.

| Step | Description | Data state | Example |
|---|---|---|---|
| 1. User query (home region) | User asks a question in Qlik Answers. Qlik retrieves relevant data from the application and holds it in memory. | In memory, home region | User asks: "What were our top sales drivers last quarter?" Data in memory: [CustomerID, Revenue, Product, Region...] |
| 2. Construct prompt (home region) | Qlik assembles the inference payload: the user's question, relevant contextual data from the app, and system instructions. Payload exists as plaintext in memory at this stage. | Plaintext, in memory only | { "question": "What were our top sales drivers...", "context": { "Q3_sales": [ {"Product": "Widget A", "Revenue": 500000}, {"Product": "Widget B", "Revenue": 350000} ] } } |
| 3. Encrypt and transmit | TLS 1.3 encryption applied to entire payload. IAM role authentication established. Transmitted over AWS private fiber backbone, never the public internet. | TLS 1.3 encrypted, AWS private network | Home Region ──[AWS Private Network]──► Inference Region: ciphertext only, no plaintext in transit |
| 4. Arrive at Amazon Bedrock (inference region) | TLS session established and verified. IAM credentials validated. Request payload decrypted by Bedrock so the model can process it. | ⚠ Plaintext at inference endpoint (required for model processing) | Decrypted request now in Bedrock memory: { "question": "What were our top sales drivers...", "context": { ...original data... } } |
| 5. AI model inference (in memory, ephemeral) | Bedrock passes plaintext to AI model. Model processes request in memory only. No disk writes. Inference duration: 1–5 seconds. Natural language response generated. | In memory only, 1 to 5 seconds | Model response: "Based on the Q3 data provided, Widget A was the top sales driver with $500K revenue, primarily from the APAC region..." |
| 6. Purge memory and return response | Original request data immediately purged from memory. Response encrypted with TLS 1.3 and sent back to home region via AWS private network. No data retained in the inference region. | TLS 1.3 encrypted, no data retained in inference region | Inference Region ──[AWS Private Network]──► Home Region: encrypted response only, original request data gone |
| 7. Display results (home region) | Response decrypted by Qlik Cloud and rendered to user in natural language. User sees insights without raw data exposure. | Home region, response displayed to user | User sees: "Widget A was your top sales driver last quarter with $500K in revenue." |
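The payload assembled in step 2 can be pictured as a small JSON document. The following Python sketch builds one from the example in the table. The `question` and `context` fields follow the table's example; the `build_payload` function and the `system` field holding the instructions are hypothetical and do not describe Qlik's internal implementation.

```python
import json

# Hypothetical system instructions; the instructions Qlik actually sends are not published.
SYSTEM_INSTRUCTIONS = "Answer using only the context provided."


def build_payload(question: str, context_rows: list, context_name: str) -> str:
    """Assemble a step 2 style inference payload and serialize it to JSON.

    The returned string is plaintext; in step 3 it would only ever be sent
    over a TLS 1.3 connection.
    """
    payload = {
        "system": SYSTEM_INSTRUCTIONS,
        "question": question,
        "context": {context_name: context_rows},
    }
    return json.dumps(payload)


rows = [
    {"Product": "Widget A", "Revenue": 500000},
    {"Product": "Widget B", "Revenue": 350000},
]
body = build_payload("What were our top sales drivers last quarter?", rows, "Q3_sales")
print(body)
```

Only the rows placed in `context` travel to the inference region, which is why the mitigation and access-control measures later in this topic focus on what goes into that field.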

Common Misconceptions

The following table addresses questions that frequently arise during security assessments of Qlik Cloud's AI features.

| Misconception | Reality |
|---|---|
| Data leaves AWS and goes to Anthropic's servers. | Incorrect. Claude is hosted on Amazon Bedrock, which runs entirely on AWS infrastructure. Anthropic does not operate the servers used for Bedrock customers. |
| Cross-region means data goes over the public internet. | Incorrect. AWS owns and operates a private fiber optic network connecting its regions globally. Traffic between regions never traverses public internet routes. |
| AI services can access our data at any time. | Incorrect. Data is transmitted only during an active inference request (typically 1–5 seconds), processed in memory, then immediately purged. There is no standing access to your data. |
| Our data is logged and stored in the inference region. | Incorrect. Amazon Bedrock logs metadata only: timestamps, model version, token counts, and error codes. Data content is never written to logs. |
| Encryption means our data is always protected, including during inference. | Requires context. Encryption protects data in transit and at rest, but AI models must operate on plaintext, so decryption occurs at the inference endpoint. This is a fundamental requirement of any AI service, not specific to Qlik Cloud. |

Security Controls

Network Controls

All cross-region traffic between Qlik Cloud and Amazon Bedrock travels over AWS's private fiber optic backbone. This network is owned and operated by AWS and is separate from the public internet — there is no BGP routing through third-party internet service providers and no mixing with public internet traffic.

Connectivity between services uses AWS PrivateLink or VPC peering. VPC security groups restrict traffic to authorized endpoints, and network ACLs provide subnet-level filtering.

Encryption

All data in transit between Qlik Cloud and Bedrock is encrypted using TLS 1.3. Each session uses unique encryption keys, and certificate-based authentication prevents man-in-the-middle attacks.
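The transport policy described above (TLS 1.3 only, with certificate-based server authentication) can be expressed on the client side with Python's standard `ssl` module. This is a minimal sketch of such a policy, assuming a Python client; it does not describe how Qlik Cloud or AWS actually configure their endpoints, and `make_tls13_client_context` is a hypothetical helper name.

```python
import ssl


def make_tls13_client_context() -> ssl.SSLContext:
    """Create a client-side TLS context that refuses anything older than TLS 1.3."""
    # create_default_context() turns on certificate verification and hostname
    # checking; without those, a man-in-the-middle could present its own certificate.
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # Reject TLS 1.2 and earlier during the handshake.
    context.minimum_version = ssl.TLSVersion.TLSv1_3
    return context


ctx = make_tls13_client_context()
print(ctx.minimum_version, ctx.verify_mode, ctx.check_hostname)
```

To use it, a socket would be wrapped with `ctx.wrap_socket(sock, server_hostname=...)`. A handshake with a server that cannot negotiate TLS 1.3, or that presents a certificate not matching the hostname, then fails instead of silently downgrading.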

Data at rest within your Qlik Cloud tenant is encrypted using AES-256, with keys managed through AWS Key Management Service (KMS). Customer-managed keys (BYOK) are used if configured by the customer.

Important: While data is encrypted in transit, it must be decrypted at the inference endpoint for the AI model to process it. This is an unavoidable requirement of all AI inference services. Encryption cannot be maintained end-to-end during active model processing.

Access Controls

Qlik Cloud authenticates to Amazon Bedrock using AWS IAM roles with temporary, automatically rotated credentials — not long-lived API keys. The principle of least privilege is applied throughout. End users never interact directly with Bedrock; all requests are mediated by Qlik Cloud, which enforces your existing access controls including role-based access, row-level security, and section access.
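Because Qlik Cloud mediates every request, the context sent to Bedrock can only contain data that the requesting user is already allowed to see in the app. The following minimal Python sketch shows that ordering, assuming a single reduction field. `apply_section_access` is hypothetical and far simpler than Qlik's actual section access and row-level security.

```python
from collections.abc import Iterable


def apply_section_access(rows: Iterable, field: str, allowed: set) -> list:
    """Keep only rows whose value in `field` the user is permitted to see.

    Hypothetical stand-in for section access: rows filtered out here never
    reach the inference payload, so the model cannot reveal them.
    """
    return [row for row in rows if row.get(field) in allowed]


rows = [
    {"Region": "APAC", "Product": "Widget A", "Revenue": 500000},
    {"Region": "EMEA", "Product": "Widget B", "Revenue": 350000},
]
# A user restricted to APAC only contributes APAC rows to the prompt context.
visible = apply_section_access(rows, "Region", {"APAC"})
print(visible)
```

The filter runs in the home region before the payload is built, so access decisions never depend on the model or on the inference region.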

Data Handling and Retention

Data processed in an inference region exists in memory only for the duration of the inference request — typically 1 to 5 seconds. After the response is generated, memory is immediately cleared. No disk writes of your data occur in inference regions. Data passed to the inference region does not identify the user, customer, or the license related to the request.
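The statement that requests do not identify the user, customer, or license can be illustrated by removing identifying fields before a request leaves the home region. In this Python sketch the field names `user_id`, `tenant_id`, and `license_id` and the `strip_identifiers` function are hypothetical; they are not Qlik's internal request format.

```python
# Hypothetical identifying fields, used for illustration only.
IDENTIFYING_KEYS = {"user_id", "tenant_id", "license_id"}


def strip_identifiers(request: dict) -> dict:
    """Return a copy of the request without identifying top-level fields."""
    return {key: value for key, value in request.items() if key not in IDENTIFYING_KEYS}


internal_request = {
    "user_id": "u-123",
    "tenant_id": "t-456",
    "license_id": "l-789",
    "question": "What were our top sales drivers last quarter?",
    "context": {"Q3_sales": [{"Product": "Widget A", "Revenue": 500000}]},
}
outbound = strip_identifiers(internal_request)
print(sorted(outbound))
```

Returning a copy leaves the home-region request intact for logging in the tenant, while only the question and context are forwarded for inference.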

Amazon Bedrock does not log data content. Only operational metadata is captured: timestamps, model version used, token counts, and error codes. Your data is never used to train or fine-tune AI models. This is contractually guaranteed in the AWS Data Processing Agreement.

Compliance and Audit

Because Qlik Cloud and Amazon Bedrock operate within the same AWS infrastructure, they share a unified compliance posture. See Qlik Trust and Security for currently held compliance certifications.

Data Storage by Location

Your data is only ever stored in your home region. The inference region performs ephemeral, in-memory processing only.

| Data type | Home region | Inference region | Retention |
|---|---|---|---|
| Raw application data | ✅ Stored | ❌ Never stored | Permanent in home region |
| User questions | ✅ Stored | ❌ Not stored | Session-based only |
| Inference request payload | Transient during API call | In memory only (1–5 sec) | Purged immediately after inference |
| AI model response | ✅ Stored | ❌ Not stored | Displayed to user; session-based |
| Embedding vectors (semantic search) | ✅ Stored for search | In memory during generation only | Permanent in home region; purged in inference region |
| Data content in logs | ❌ Not logged | ❌ Never logged | N/A |

Storage vs. in-memory processing: Storage means data is written to disk and persists across server restarts. In-memory processing means data is held in RAM temporarily and is gone when the process completes or the server restarts.

Amazon Bedrock as a Security Layer

Amazon Bedrock is AWS's fully managed service for accessing foundation models from multiple AI providers. When Qlik Cloud uses large language models (LLMs) via Bedrock, the model is hosted on AWS infrastructure, not on the model vendor's own servers. The model vendor does not operate the infrastructure for Bedrock customers and does not have access to your inference requests or data. AWS handles all provisioning, scaling, security, and networking.

This is architecturally significant. Accessing Claude directly via Anthropic's API would involve data leaving the AWS security boundary and traversing the public internet to Anthropic's servers, under a separate compliance framework and DPA. Using Bedrock keeps the entire flow within AWS, under a single governance framework.

Data isolation guarantees: Bedrock enforces a no-training policy — your data is never used to improve or fine-tune the underlying models. This is contractually enforced through agreements between AWS and its model providers, including Anthropic.

Enterprise governance features: Bedrock provides model version pinning, metadata logging to CloudWatch, VPC integration for network isolation, and configurable guardrails for content safety.

Compliance inheritance: Bedrock inherits all AWS compliance certifications. For security assessments, this means evaluating AWS's security posture — which organizations already trust for Qlik Cloud — rather than assessing a separate external AI vendor.

Why Cross-Region Inference Is Necessary

Cross-region AI inference is a practical requirement arising from the hardware constraints of modern large language models, not a product design preference.

Large language models require specialized GPU hardware — particularly NVIDIA H100 and A100 GPUs — that is in limited global supply. AWS cannot provision Bedrock inference capacity in every region simultaneously. New data centers take 2–3 years to build, and GPU availability lags further behind. As a result, Bedrock capacity is concentrated in a small number of high-capacity regions.

Even in regions where capacity exists, cross-region routing is necessary for high availability. During peak business hours, a regional Bedrock deployment may be highly utilized. Routing to a different region ensures consistent response times and prevents timeouts.

Example: At 10 AM AEST (peak business hours in Sydney), Sydney Bedrock capacity may be 85% utilized while Singapore capacity is 40% utilized due to the different time zone. Routing to Singapore maintains a 2–3 second response time rather than a 30+ second wait or timeout.
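The routing decision in this example can be sketched as a simple threshold rule. The Python below is hypothetical: AWS does not publish Bedrock's routing algorithm, and the 80% threshold and the `choose_region` function are assumptions made for illustration. The region codes `ap-southeast-2` (Sydney) and `ap-southeast-1` (Singapore) are standard AWS region identifiers.

```python
def choose_region(utilization: dict, home: str, threshold: float = 0.8) -> str:
    """Pick the region in which to run inference (hypothetical rule, not Bedrock's logic).

    Stay in the home region while its utilization is below `threshold`;
    otherwise route to the least-utilized region. Values are fractions (0.85 means 85%).
    """
    if utilization[home] < threshold:
        return home
    return min(utilization, key=utilization.get)


# Figures from the example: Sydney 85% utilized, Singapore 40% utilized.
load = {"ap-southeast-2": 0.85, "ap-southeast-1": 0.40}
print(choose_region(load, home="ap-southeast-2"))  # ap-southeast-1
```

Preferring the home region until it is busy keeps data local whenever capacity allows, and routes elsewhere only when staying would cost response time.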

As AWS expands Bedrock availability to more regions over time, cross-region routing may become less frequent. However, this is a quickly evolving area and we are highly dependent on our vendors. Load balancing across regions will also remain a feature of high-availability service design.

Security Assessment Guidance

The following guidance is intended to help security and compliance teams determine how to treat cross-region AI inference risk within their organizations.

When This Risk Is Generally Acceptable

Cross-region inference within AWS is consistent with the risk posture of organizations that already use Qlik Cloud on AWS, accept momentary in-memory data exposure during inference (1–5 seconds), and rely on AWS's DPA for contractual data protection. It is appropriate where service availability and consistent performance are priorities.

Risk Mitigation Options

Organizations can reduce exposure by excluding highly sensitive fields from AI-enabled features using section access or field exclusions, or by pre-processing data before querying — for example, tokenizing PII or aggregating values.
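The two pre-processing techniques mentioned above, tokenizing PII and aggregating values, can be sketched in a few lines of Python. This is an illustration of something you might run in your own data preparation before querying; it is not a Qlik feature. Keyed HMAC-SHA256 is one common tokenization approach, and the `tokenize` and `aggregate_revenue` helpers, the `tok_` prefix, and the placeholder key are assumptions.

```python
import hashlib
import hmac
from collections import defaultdict


def tokenize(value: str, key: bytes) -> str:
    """Replace a PII value with a deterministic keyed token (HMAC-SHA256)."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


def aggregate_revenue(rows: list) -> dict:
    """Sum revenue per product so individual customer rows are never sent."""
    totals = defaultdict(int)
    for row in rows:
        totals[row["Product"]] += row["Revenue"]
    return dict(totals)


# Placeholder key: keep the real key in the home region. Without it, nobody can
# compute the token for a guessed e-mail address and match it.
SECRET_KEY = b"replace-with-a-managed-secret"

rows = [
    {"Customer": "alice@example.com", "Product": "Widget A", "Revenue": 300000},
    {"Customer": "bob@example.com", "Product": "Widget A", "Revenue": 200000},
    {"Customer": "alice@example.com", "Product": "Widget B", "Revenue": 350000},
]
tokenized = [{**row, "Customer": tokenize(row["Customer"], SECRET_KEY)} for row in rows]
print(aggregate_revenue(rows))  # {'Widget A': 500000, 'Widget B': 350000}
```

Deterministic tokens still let the model count or group by customer without seeing who the customer is, while aggregation removes customer-level rows entirely.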

When This Risk May Not Be Acceptable

Cross-region inference may be incompatible with data sovereignty laws that prohibit any cross-border processing (common in certain government and healthcare contexts), regulatory requirements that mandate processing within specific geographic boundaries, or threat models that treat any in-memory plaintext exposure during inference as unacceptable.

In these cases, use the tenant-level setting to disable cross-region data processing. Disabled is the default setting for Qlik Cloud tenants.