Defining Cloudera connection parameters with Spark Universal

About this task

Talend Studio connects to a Cloudera cluster to run the Job from this cluster.

Procedure

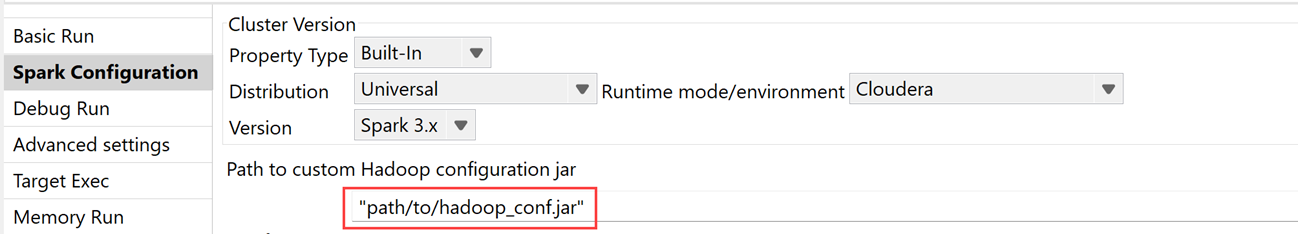

- Click the Run view beneath the design workspace, then click the Spark configuration view.

- Select Built-in from the Property type drop-down list.

If you have already set up the connection parameters in the Repository as explained in Centralizing a Hadoop connection, you can reuse them. To do this, select Repository from the Property type drop-down list, then click the […] button to open the Repository Content dialog box and select the Hadoop connection to be used.

Tip: Setting up the connection in the Repository saves you from configuring that connection each time you need it in the Spark configuration view of your Jobs. The fields are filled in automatically.

- Select Universal from the Distribution drop-down list, the Spark version from the Version drop-down list, and Cloudera from the Runtime mode/environment drop-down list.

Cloudera mode supports only Spark 3.x versions.

- Enter the path to the Hadoop configuration JAR file with connection parameters for your chosen Cloudera cluster.

The JAR file bundles the *-site.xml files of the cluster, which contain all the information needed to establish the connection.

The JAR file must include the following XML files:

- hdfs-site.xml

- core-site.xml

- yarn-site.xml

- mapred-site.xml

If you use Hive or HBase components, the JAR file must also include the corresponding XML file:

- hive-site.xml

- hbase-site.xml
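
Before entering the path, it can help to confirm that the archive really contains the files listed above. The following is a minimal sketch and not part of Talend Studio: the function name `missing_site_files` and the `with_hive` and `with_hbase` flags are hypothetical, and it assumes the XML files may sit at any folder depth inside the JAR.

```python
import posixpath
import zipfile

# Files this page lists as mandatory in the Hadoop configuration JAR.
REQUIRED = {"hdfs-site.xml", "core-site.xml", "yarn-site.xml", "mapred-site.xml"}


def missing_site_files(jar_path, with_hive=False, with_hbase=False):
    """Return the sorted names of expected *-site.xml files absent from the JAR."""
    expected = set(REQUIRED)
    if with_hive:
        expected.add("hive-site.xml")
    if with_hbase:
        expected.add("hbase-site.xml")
    # A JAR is a ZIP archive, so the standard zipfile module can list its entries.
    with zipfile.ZipFile(jar_path) as jar:
        # Compare base names only (assumption: folder depth does not matter).
        present = {posixpath.basename(name) for name in jar.namelist()}
    return sorted(expected - present)
```

An empty list means every expected file was found. For example, `missing_site_files("hadoop-conf.jar", with_hive=True)` would also check for hive-site.xml, where `hadoop-conf.jar` is a placeholder file name.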

- If you run your Spark Job on Windows, specify the location of the winutils.exe program:

- If you want to use your own winutils.exe file, select the Define the Hadoop home directory check box and enter its folder path.

- Otherwise, leave the Define the Hadoop home directory check box clear. Talend Studio will generate and use a directory automatically for this Job.

- Enter the basic configuration information:

| Parameter | Usage |
| --- | --- |
| Use custom classpath | Select this check box to specify additional classpath entries for Spark Jobs, allowing you to include custom libraries and dependencies when running on a YARN cluster. |
| Use local timezone | Select this check box to let Spark use the local time zone provided by the system. Note: If you clear this check box, Spark uses the UTC time zone. Some components also have the Use local timezone for date check box. If you clear the check box in a component, the component inherits the time zone from the Spark configuration. |
| Use dataset API in migrated components | Select this check box to let the components use the Dataset (DS) API instead of the Resilient Distributed Dataset (RDD) API. If you select the check box, the components inside the Job run with DS, which improves performance. If you clear the check box, the components inside the Job run with RDD, which means the Job remains unchanged and backward compatibility is ensured. This check box is selected by default, but if you import a Job from version 7.3 or earlier, the check box is cleared because those Jobs run with RDD. Important: If your Job contains tDeltaLakeInput and tDeltaLakeOutput components, you must select this check box. |
| Use timestamp for dataset components | Select this check box to use java.sql.Timestamp for dates. Note: If you leave this check box clear, java.sql.Timestamp or java.sql.Date can be used depending on the pattern. |
| Batch size (ms) | Enter the time interval at the end of which the Spark Streaming Job reviews the source data to identify changes and processes the new micro batches. |
| Define a streaming timeout (ms) | Select this check box and, in the field that is displayed, enter the time frame at the end of which the Spark Streaming Job automatically stops running. Note: If you are using Windows 10, it is recommended to set a reasonable timeout to prevent Windows Service Wrapper from running into issues when sending termination signals from Java applications. If you face such an issue, you can also manually cancel the Job from your Azure Synapse workspace. |
| Parallelize output files writing | Select this check box to enable the Spark Batch Job to run multiple threads in parallel when writing output files. This option improves execution performance. When you leave this check box clear, the output files are written sequentially in one thread. At the subJob level, each subJob is processed sequentially; only the writing of output files inside a subJob is parallelized. This option is only available for Spark Batch Jobs containing the following output components: tAvroOutput, tFileOutputDelimited (only when the Use dataset API in migrated components check box is selected), and tFileOutputParquet. Important: To avoid memory problems during the execution of the Job, take into account the size of the files being written and the capacity of the execution environment before using this parameter. |

- Enter the authentication information by specifying your username. You can also use Kerberos to authenticate by selecting the Use Kerberos authentication check box.

- Select the Set tuning properties check box to define the tuning parameters, by following the process explained in Tuning Spark for Apache Spark Batch Jobs.

Important: You must define the tuning parameters; otherwise, you may get an error (400 - Bad request).

- Select the Enable spark event logging check box to make the Spark application logs of the Job persistent in the file system.

The parameters relevant to Spark logs are displayed:

- Compress Spark event logs: Select this check box to compress the logs.

- Spark event logs directory: Enter the directory in which Spark events are logged.

- Spark history server address: Enter the location of the history server.

Your cluster administrator may have set these properties in the configuration files. Contact the administrator to get the exact values.
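
When asking the administrator for these values, it may help to know which standard Apache Spark properties are likely involved. The sketch below is an illustration under assumptions: the property names are real Spark settings, but the mapping from each Talend Studio field to a property is inferred rather than documented on this page, and the function name, directory, and address are hypothetical placeholders.

```python
def event_log_properties(log_dir, history_server, compress=True):
    """Build Spark properties that plausibly mirror the event logging fields."""
    return {
        # Enable spark event logging (assumed mapping)
        "spark.eventLog.enabled": "true",
        # Compress Spark event logs (assumed mapping)
        "spark.eventLog.compress": "true" if compress else "false",
        # Spark event logs directory (assumed mapping)
        "spark.eventLog.dir": log_dir,
        # Spark history server address (assumed mapping, YARN deployments)
        "spark.yarn.historyServer.address": history_server,
    }


# Placeholder values; replace them with the ones used by your cluster.
props = event_log_properties(
    "hdfs:///user/spark/applicationHistory",
    "historyserver.example.com:18088",
)
for key, value in sorted(props.items()):
    print(f"{key}={value}")
```

Comparing this output with the cluster's spark-defaults.conf is one way to spot values that differ from what the administrator configured.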

- In the Spark "scratch" directory field, enter the local path where Talend Studio stores temporary files, such as JARs to transfer.

If you run the Job on Windows, the default disk is C:. Leaving /tmp in this field will use C:/tmp as the directory.
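
The drive defaulting described above can be pictured with Python's `pathlib`. This is only a sketch of the rule, not the logic Talend Studio actually runs; the function name `resolve_scratch_dir` and its `default_drive` parameter are hypothetical.

```python
from pathlib import PureWindowsPath


def resolve_scratch_dir(field_value, default_drive="C:"):
    """Show how a drive-less path such as /tmp lands on the default drive."""
    # Joining a drive root with a rooted, drive-less path keeps the drive;
    # a value that already names a drive replaces the default entirely.
    return (PureWindowsPath(default_drive + "/") / field_value).as_posix()


print(resolve_scratch_dir("/tmp"))
print(resolve_scratch_dir("D:/spark/tmp"))
```

The first call yields `C:/tmp`, matching the behavior described for the default field value, while the second keeps the explicitly named `D:` drive.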

- Select the Wait for the Job to complete check box to make Talend Studio or, if you use Talend JobServer, your Job JVM keep monitoring the Job until the execution of the Job is over.

Selecting this check box sets the spark.yarn.submit.waitAppCompletion property to true. While it is generally useful to select this check box when running a Spark Batch Job, it makes more sense to keep it clear when running a Spark Streaming Job, which is designed to run continuously.
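
The guidance above amounts to a simple rule of thumb, sketched here for illustration. The function name and the `"batch"` and `"streaming"` labels are hypothetical; only the `spark.yarn.submit.waitAppCompletion` property name comes from this page.

```python
def wait_app_completion(job_type):
    """Suggest a spark.yarn.submit.waitAppCompletion value for a Job type."""
    if job_type not in ("batch", "streaming"):
        raise ValueError(f"unknown Job type: {job_type!r}")
    # Batch Jobs finish on their own, so monitoring them to the end is useful;
    # Streaming Jobs run continuously, so the submitter usually should not wait.
    value = "true" if job_type == "batch" else "false"
    return {"spark.yarn.submit.waitAppCompletion": value}


print(wait_app_completion("batch"))
print(wait_app_completion("streaming"))
```

This reflects a recommendation, not a constraint: either value can be used with either Job type.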

- To make your Job resilient to failure, select Activate checkpointing to enable Spark’s checkpointing operation.

In the Checkpoint directory field, enter the cluster file system path where Spark saves context data, such as metadata and generated RDDs.

- In the Advanced properties table, add any Spark properties you want to override the defaults set by Talend Studio.
